by Philippe Makowski, January 2010

Introduction

If I had to pick one word to describe Linux, it would be "choice". After choosing a distribution, you have to choose the file system that will store your data. Today, the main choices are Ext2, Ext3, Ext4 and XFS. Let's see whether the file system has an impact on performance when we run Firebird databases.

Method

To test these file systems, I used TPC-B (http://sourceforge.net/projects/dbbench/) and TPC-C benchmarks [1] against a SuperClassic Firebird 2.5 RC1.

You can find information on the TPC tests at http://www.tpc.org [1]. In summary, the TPC-C benchmark simulates a large wholesale outlet’s inventory management system, and TPC-B is designed as a stress test of the core portion of a database system. Both run for a limited time and report the number of transactions per minute. Both mix selects, updates and inserts.
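The measurement principle (run for a fixed time, count completed transactions, report a per-minute rate) can be sketched as follows. This is only an illustration of the idea, not the code of the dbbench tools; the function name `transactions_per_minute`, the `run_transaction` callback and the injectable `clock` parameter are all assumptions made for this example.

```python
import time
from typing import Callable


def transactions_per_minute(run_transaction: Callable[[], None],
                            duration_s: float,
                            clock: Callable[[], float] = time.monotonic) -> float:
    """Call run_transaction repeatedly for about duration_s seconds
    and return the observed rate in transactions per minute."""
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    start = clock()
    count = 0
    while clock() - start < duration_s:
        run_transaction()  # hypothetical unit of work, e.g. one database transaction
        count += 1
    elapsed = clock() - start
    return count * 60.0 / elapsed


# Usage with a placeholder transaction that does nothing (illustration only).
rate = transactions_per_minute(lambda: None, duration_s=0.05)
print(f"{rate:.0f} transactions per minute")
```

In a real run, `run_transaction` would execute one benchmark transaction against the database, and the duration would be minutes rather than a fraction of a second.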

All tests were run in a VMware virtual machine with a 30 GB pre-allocated virtual SCSI disk. The virtual machine was set up with Mandriva 2010.0 x86_64, and Firebird was built on this system. I did not change anything in the Firebird setup, keeping the default database cache.

The goal is not to benchmark Firebird itself, but to compare the respective file systems and their settings. To have a reference value, I first ran the benchmarks with a database on a raw device, i.e., with no file system at all. All results in this article are expressed relative to the base value of 100 obtained with the raw device. I did it this way because I wanted to evaluate not the absolute performance, but the relative performance of the various file system settings.
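As a minimal sketch of this normalisation, the snippet below converts transactions-per-minute figures into an index where the raw device equals 100. The function name `relative_index` and the sample numbers are hypothetical and are not measurements from this article.

```python
def relative_index(tpm: float, raw_tpm: float) -> float:
    """Express a throughput figure relative to the raw-device baseline (= 100)."""
    if raw_tpm <= 0:
        raise ValueError("baseline throughput must be positive")
    return 100.0 * tpm / raw_tpm


# Hypothetical transactions-per-minute figures, for illustration only.
samples = {"raw": 2000.0, "fs_a": 2100.0, "fs_b": 1900.0}
indexed = {name: round(relative_index(tpm, samples["raw"]), 2)
           for name, tpm in samples.items()}
print(indexed)  # {'raw': 100.0, 'fs_a': 105.0, 'fs_b': 95.0}
```

With this convention, a value of 105 reads as "5% faster than the raw device" and a value of 95 as "5% slower".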

Results

With default settings

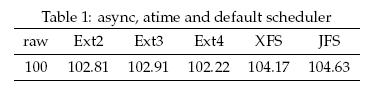

By default, the Linux distribution mounts file systems without the sync option and with the atime option. That means all I/O is done asynchronously and that the inode access time is updated on each access. It is certainly not the best setup for a Firebird database if you want to be sure that all data are written to disk quickly, but it gives us a first set of figures to compare.

As you can see, with these mount options, JFS is 4.63% faster than the raw device, and all ext file systems have nearly the same performance.

If we really want reliable writes and the certainty that all I/O is done synchronously, we have to use different mount options.

With sync option

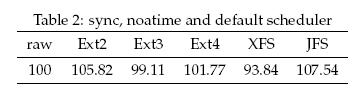

Now we run the same tests, but with the sync and noatime mount options. That means all I/O is done synchronously and that the inode access time is not updated (for faster access).
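Before running a test, it can be worth checking which options a file system is really mounted with. The sketch below parses text in the format of /proc/mounts (device, mount point, file system type, comma-separated options). The helper name `mount_options`, the device and the mount point /var/lib/firebird are assumptions chosen for illustration.

```python
from typing import Optional, Set


def mount_options(mount_point: str, mounts_text: str) -> Optional[Set[str]]:
    """Return the option set for mount_point from /proc/mounts-style text,
    or None if the mount point is not listed."""
    found = None
    for line in mounts_text.splitlines():
        fields = line.split()
        # Expected fields: device, mount point, fs type, options, dump, pass
        if len(fields) >= 4 and fields[1] == mount_point:
            found = set(fields[3].split(","))  # a later entry overrides an earlier one
    return found


# Hypothetical entry, for illustration only.
sample = "/dev/sdb1 /var/lib/firebird ext3 rw,sync,noatime 0 0\n"
opts = mount_options("/var/lib/firebird", sample)
print(sorted(opts))  # ['noatime', 'rw', 'sync']
```

On a live system, the second argument would be the content of /proc/mounts, for example `open("/proc/mounts").read()`.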

With these new settings, the ranking changes: XFS and Ext3 perform badly, and JFS is the winner, even faster than in our first tests with the default options. The differences are still not really big, though: around 7 points at most.

Deadline I/O scheduler

The Linux kernel, the core of the operating system, is responsible for controlling disk access by using kernel I/O scheduling. In an issue of Red Hat Magazine [2] we are told:

The Completely Fair Queuing (CFQ) scheduler is the default algorithm in Red Hat Enterprise Linux 4. As the name implies, CFQ maintains a scalable per-process I/O queue and attempts to distribute the available I/O bandwidth equally among all I/O requests. CFQ is well suited for mid-to-large multi-processor systems and for systems which require balanced I/O performance over multiple LUNs and I/O controllers. The Deadline elevator uses a deadline algorithm to minimize I/O latency for a given I/O request. The scheduler provides near real-time behaviour and uses a round robin policy to attempt to be fair among multiple I/O requests and to avoid process starvation. Using five I/O queues, this scheduler will aggressively re-order requests to improve I/O performance.

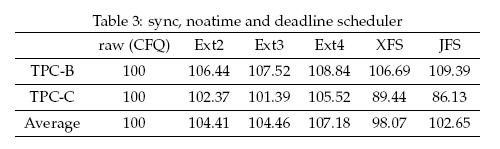

So, let's test the deadline elevator:

There is no clear winner. The two TPC tests don’t produce the same ranking but, clearly, the scheduler has an impact on performance.

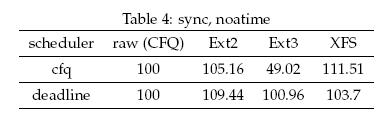

These tests were done with a 2.6.31 x86_64 kernel, two CPUs and 4 GB of RAM. To see whether the scheduler always has such an impact on performance, I set up a new box with CentOS 4, an i386 2.6.9 kernel, one CPU and 2 GB of RAM. A fairly different box, as you can see. Let's run the TPC-B test on this new box and see what we get. No JFS and no Ext4 this time, because Ext4 has been stable only since kernel 2.6.28 and because JFS is not supported out of the box on RHEL 4 or CentOS 4.

The choice of the scheduler can have a great impact on performance, as we see, but we cannot say that one scheduler is always better than another. We can just say that the scheduler is a point that has to be taken into consideration when you want to get the maximum performance out of your systems.
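To know which scheduler a test actually ran with, you can read the sysfs file /sys/block/&lt;device&gt;/queue/scheduler, where 2.6 kernels that expose it list the available schedulers and mark the active one with square brackets. The sketch below parses that text; the function names and the device name `sda` are assumptions for illustration, and some kernels or devices may report the value differently.

```python
from typing import List, Tuple


def parse_scheduler(text: str) -> Tuple[str, List[str]]:
    """Split text such as 'noop anticipatory deadline [cfq]' into
    (active scheduler, list of all available schedulers)."""
    names = text.split()
    active = [n[1:-1] for n in names if n.startswith("[") and n.endswith("]")]
    if len(active) != 1:
        raise ValueError("expected exactly one bracketed (active) scheduler")
    available = [n.strip("[]") for n in names]
    return active[0], available


def read_scheduler(device: str) -> Tuple[str, List[str]]:
    """Read the scheduler setting of a block device, e.g. 'sda' (hypothetical name)."""
    with open(f"/sys/block/{device}/queue/scheduler") as fh:
        return parse_scheduler(fh.read())


print(parse_scheduler("noop anticipatory deadline [cfq]"))
# ('cfq', ['noop', 'anticipatory', 'deadline', 'cfq'])
```

Recording this value next to each benchmark result makes it easier to compare runs made with different schedulers.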

Conclusion

"Don’t believe benchmarks made by someone other than yourself !"

We have observed that, apart from the scheduler effect on an old 2.6 Kernel and Ext3, there are no big performance differences. The conclusion is that to go after the best performance for your Firebird databases, you have to do your own bench tests first to discover what performs best on your hardware. First, choose the file system you trust for reliability and, later, try to change the scheduler to find the better performer. Keep in mind that there are no general rules! Different hardware and kernel combinations are going to exhibit different behaviour with respect to the scheduler.

References

- [1] TPC, Transaction Processing Performance Council, available at http://www.tpc.org.

- [2] D. John Shakshober, Choosing an I/O Scheduler for Red Hat® Enterprise Linux® 4 and the 2.6 Kernel (June 2005), available at http://www.redhat.com/magazine/008jun05/features/schedulers/